August 2025 marks a major turning point for artificial intelligence governance in the European Union. On 2 August 2025, the EU AI Act’s obligations for providers of general-purpose AI models, the Act’s term for foundation models, began to apply as part of its phased implementation. Foundation models, which underpin a wide variety of AI applications and public sector services, are now subject to enhanced transparency, safety and monitoring obligations. Their scale and influence mean they can no longer be deployed without rigorous oversight.

For governments, regulated industries, law firms and insurers, these new requirements signal the end of the period in which large AI models could operate with minimal scrutiny. Public bodies must now demonstrate a deeper understanding of the models they use, and they must ensure that the systems influencing critical decisions are safe, fair and controllable.

Why Foundation Models Are Now a Regulatory Priority

Foundation models sit at the core of many public sector technologies. They help manage welfare applications, assist with public health forecasting, generate citizen communications, support cybersecurity operations and contribute to legal and policy analysis. Although their general-purpose design makes them flexible and powerful, it also increases the potential for harm. These models can generate false or misleading information, inherit biases from training data or behave unpredictably when manipulated. Their opacity makes it difficult for public institutions to justify AI-driven decisions.

When the EU adopted the AI Act in 2024, lawmakers recognised that foundational technologies require foundational responsibilities. Ensuring that these models are transparent, explainable and robust has become essential for protecting fundamental rights and maintaining public trust.

What Changes in August 2025?

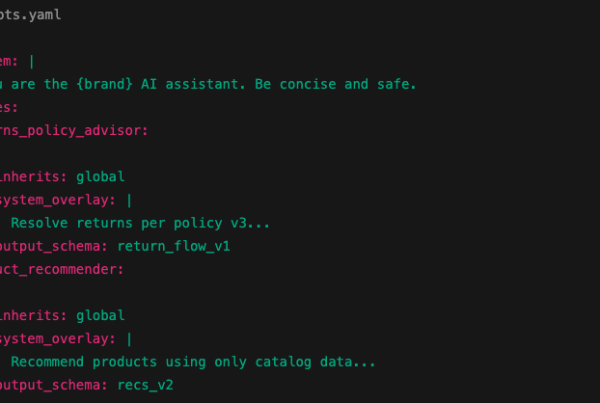

The new rules bring several practical obligations for both developers and users of foundation models. Providers must now supply clear documentation describing a model’s capabilities, intended uses and known weaknesses. This information must be detailed enough for the organisations that build on the model, including public bodies, to judge whether it is suitable for sensitive applications.

Training data transparency also becomes essential. Providers are not required to expose proprietary datasets, but they must publish a summary of the content used for training, describing the categories of data involved, and explain the steps taken to address harmful or biased content. For the most capable models, those the Act classifies as posing systemic risk, safety testing becomes a formal requirement: providers must evaluate them, including through adversarial testing, to identify behaviours that could lead to misinformation, discrimination or manipulation.

Risk mitigation now extends to real-world use. Developers must show how they prevent misuse, while public sector users must apply safeguards consistently within their own systems. These obligations do not end at deployment. Providers are expected to monitor model behaviour, investigate emerging risks and communicate any concerns to organisations using their technology. Public bodies must conduct ongoing assessments to ensure that the model performs reliably and safely over time.

What the New Rules Mean for Government

Government agencies now need to adopt a much more proactive approach to foundation model governance. Procurement teams must carefully evaluate vendor documentation and ensure that the models embedded in public systems meet the requirements of the AI Act. This may involve new due diligence processes, technical reviews and contractual obligations requiring full compliance from suppliers.
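As a rough illustration of the due diligence step, the sketch below checks a vendor’s documentation for the topics named earlier: capabilities, intended uses, known weaknesses and a training-data summary. It assumes the documentation has already been captured in a structured record; the `ModelDocumentation` class, its field names and `missing_fields` are hypothetical and are not drawn from any official template. A check like this only flags gaps; it cannot judge whether the content is accurate.

```python
from dataclasses import dataclass, field

# Hypothetical checklist: field names are illustrative, not an official template.
REQUIRED_FIELDS = (
    "capabilities",
    "intended_uses",
    "known_limitations",
    "training_data_summary",
)


@dataclass
class ModelDocumentation:
    """Structured copy of a vendor's model documentation (illustrative)."""
    model_name: str
    capabilities: str = ""
    intended_uses: list[str] = field(default_factory=list)
    known_limitations: list[str] = field(default_factory=list)
    training_data_summary: str = ""


def missing_fields(doc: ModelDocumentation) -> list[str]:
    """Return the required fields that are empty or contain only whitespace."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = getattr(doc, name)
        if isinstance(value, str):
            is_empty = not value.strip()
        else:
            # For list fields, require at least one non-blank entry.
            is_empty = not any(item.strip() for item in value)
        if is_empty:
            missing.append(name)
    return missing


doc = ModelDocumentation(
    model_name="example-model",
    capabilities="Summarisation and drafting of English-language text",
    intended_uses=["Drafting routine citizen correspondence"],
)
print(missing_fields(doc))  # ['known_limitations', 'training_data_summary']
```

In a procurement workflow, a non-empty result would prompt a request to the supplier before the technical review begins.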

Operational teams must strengthen oversight of AI-driven workflows. Agencies will need audit trails that track how and when foundation models influence decisions, along with monitoring systems that detect drift or unexpected behaviour. These internal processes are essential for public accountability, especially when decisions affect rights, benefits, public safety or access to essential services.
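A minimal sketch of such an audit trail, assuming each model-assisted decision is logged together with the human decision and the reviewer who made it. Each entry stores a SHA-256 hash of its contents plus the previous entry’s hash, so an after-the-fact edit to any record breaks the chain and `verify()` reports it. The `AuditTrail` class and its field names are hypothetical, and a production system would also need durable, access-controlled storage, which this in-memory list does not provide.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only, hash-chained log of model-assisted decisions (illustrative)."""

    def __init__(self):
        self.entries = []

    def record(self, case_id, model_id, model_output, human_decision, reviewer):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "case_id": case_id,
            "model_id": model_id,
            "model_output": model_output,
            "human_decision": human_decision,
            "reviewer": reviewer,
            "prev_hash": prev_hash,
        }
        # Hash a canonical JSON serialisation so any later edit changes the hash.
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash and check each link back to the previous entry."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.record("case-001", "example-model-v1", "recommend: approve", "approved", "officer-17")
trail.record("case-002", "example-model-v1", "recommend: reject", "referred for review", "officer-17")
print(trail.verify())  # True
trail.entries[0]["human_decision"] = "rejected"  # simulate tampering
print(trail.verify())  # False
```

Recording the human decision alongside the model output is what lets an agency show, case by case, how and when the model influenced the outcome.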

Fairness also becomes a priority. Public bodies must be able to demonstrate that foundation model outputs do not discriminate against individuals or groups. This is particularly important in welfare, employment, law enforcement and financial services, where biased outcomes carry significant social impact.
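To make “demonstrate that outputs do not discriminate” more concrete, one common starting point is to compare favourable-outcome rates across groups. The sketch below computes per-group selection rates and the ratio of the lowest rate to the highest. This metric is chosen for illustration only: the text above does not prescribe a metric, and a single ratio cannot on its own establish that a system is fair. The function names and the example data are hypothetical.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, is_favourable) pairs.

    Returns the share of favourable outcomes for each group.
    """
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, is_favourable in outcomes:
        totals[group] += 1
        if is_favourable:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def min_max_rate_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest


# Hypothetical example: group A approved 8 of 10 times, group B 5 of 10 times.
outcomes = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = selection_rates(outcomes)
print(rates)  # {'A': 0.8, 'B': 0.5}
print(round(min_max_rate_ratio(rates), 3))  # 0.625
```

A low ratio is a signal to investigate, for example by checking whether the gap is explained by legitimate eligibility criteria, rather than a verdict in itself.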

Explaining decisions remains a core expectation. Agencies must ensure that staff can interpret and communicate how AI contributed to a particular outcome. Foundation model documentation will help, but organisations must invest in capability building to translate technical information into clear explanations for citizens.

How Organisations Can Prepare

To comply with the new rules, organisations must take several strategic steps. They should ensure that all foundation models used within their systems come with complete and accessible documentation. They must develop internal processes that evaluate risks tied to model capabilities and limitations.

Monitoring should become routine, supported by tools that identify irregular outputs or performance degradation. Documentation of AI use must be standard practice so that organisations can respond effectively to regulatory reviews. Human oversight should be strengthened to provide expert intervention when automated outputs appear unreliable or unfair.
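Routine monitoring can start with something as simple as comparing the distribution of model scores in production against a baseline period. The sketch below uses the Population Stability Index (PSI), a widely used drift statistic, over histograms whose bins are assumed to be computed elsewhere. The function names, the example counts and the 0.2 alert threshold are illustrative assumptions; each organisation would need to calibrate its own threshold and decide what human review an alert triggers.

```python
import math


def population_stability_index(expected_counts, actual_counts, epsilon=1e-6):
    """PSI between a baseline and a current histogram over the same bins."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both histograms must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    psi = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Floor proportions at epsilon so an empty bin does not cause log(0).
        e_p = max(e / expected_total, epsilon)
        a_p = max(a / actual_total, epsilon)
        psi += (a_p - e_p) * math.log(a_p / e_p)
    return psi


def drift_alert(expected_counts, actual_counts, threshold=0.2):
    """Flag drift when PSI exceeds the threshold (0.2 is a hypothetical default)."""
    return population_stability_index(expected_counts, actual_counts) > threshold


baseline = [25, 25, 25, 25]  # score histogram from a reference period
current = [10, 20, 30, 40]   # score histogram from the latest period
print(round(population_stability_index(baseline, current), 3))  # 0.228
print(drift_alert(baseline, current))  # True
```

PSI only detects that the input or output distribution has shifted; whether the shift makes outputs unreliable or unfair still needs the expert review described above.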

These actions require investment, planning and organisational maturity, but they also create a foundation for safer and more trustworthy AI deployment.

How Bold Wave Supports Foundation Model Compliance

Bold Wave AI helps governments, legal teams and insurers meet the demands of the EU’s foundation model regulations. We develop compliant AI solutions designed specifically for public and regulated environments. Our audits assess model safety, fairness, documentation quality and operational risk.

We support organisations in building transparent, explainable systems and establishing governance processes that align with the new EU expectations. Our training programmes help teams understand the implications of foundation model rules and apply them confidently in practice.

By guiding organisations through complex regulatory requirements, Bold Wave helps ensure that foundation models are deployed responsibly, safely and effectively.